tl;dr

This post is about a recently published paper that takes a critical look at formal proofs as a means to assure the security of software systems, which I authored with Paul van Oorschot. I’m delighted to say that the paper received a Best Paper award at this year’s IEEE Cybersecurity Development Conference (SecDev).

We argue that software proofs are poorly understood, especially outside formal methods researchers. Even amongst experts, however, there is disagreement about their precise value for producing secure software. They also come with certain side effects that until now have remained under-explored.

As an example, if I prove a piece of software secure and have to modify the software to complete the proof, is that modification a definite security improvement? Does it stop a known attack or might I, perhaps, have made the modification just to ease the proof? Can you be sure that the modification hasn’t opened a new security hole unseen by the proof?

We consider these kinds of questions and use a number of examples, drawn from high-profile verification projects, from our own experience and the literature to help answer them. We also raise a range of new research questions that we think should be the focus of future research, to better understand the value and the costs of formal proof as a software assurance mechanism.

Software and Security Proofs

Software is imperfect. Almost all of it is insecure. We have all become inured to everyday data breaches, for instance. Likewise, every security-conscious Internet user implicitly understands that the price of being Internet-connected is being forced to apply an endless stream of software security updates—each one a time sacrifice to the god of security.

How can we more reliably build secure software? Can we build software that is perfectly secure? Assuming we could, how might we demonstrate that a piece of software was invulnerable before we deployed it?

Many researchers—my past self included—have wondered whether logical proof might be an answer to these questions. One way to interrogate a program is by representing it (specifically, its behaviours) in logic and then trying to prove whether its behaviours are secure. By “secure” we really mean whether any of its behaviours allow an attacker to do something that they shouldn’t be able to do, like causing the program to deviate from its intended behaviour (e.g. arbitrary code execution), or reveal sensitive data (data leakage), or modify data that should remain protected (integrity violation).

Talking about all of this requires us to represent the program and its behaviours in logic, as well as the potential actions of the attacker. Researchers and practitioners have been doing this for a while, sometimes with quite spectacular results like discovering security flaws in programs and protocols that had remained hidden for decades.

Researchers have gotten pretty good at proving programs secure. In the last 5-or-so years alone there have been papers published about provably secure operating system kernels, TLS implementations, conference management systems, social media platforms, cryptographic algorithms, and many more.

It is high time, therefore, to take a step back and cast a critical eye on the role of using formal proof to develop secure software systems.

With my co-author Paul van Oorschot, this is exactly what we did in a recent paper, which appeared at the 2018 IEEE Cybersecurity Development Conference (SecDev). We were delighted when this paper was recognised with a Best Paper award.

The Value of Proofs

In the paper we show how there remains disagreement about what is the precise value of carrying out a proof of security for a piece of software. Some people, for instance, have argued that proofs provide qualified guarantees of security, meaning that a sound proof guarantees that a program enjoys certain security properties, so long as all of the proof’s assumptions hold in reality. A good example of this point of view is the excellent writings on the seL4 proofs.

All proofs make assumptions, for instance about the observational or computational capabilities of the attacker, or that the hardware on which the software executes won’t malfunction, or that the software will be correctly deployed and used. These assumptions are encoded either explicitly or implicitly when we represent the program’s behaviours, and the actions of the attacker, in logic. All of the reasoning that we do during a security proof rests on these assumptions, and the conclusions of that reasoning apply to the real world (i.e. to the software in practice) only to the degree that the assumptions hold in reality.

Under this view, it is worth considering what value a proof has if its assumptions do not hold in practice. That is still an open question, which we highlight in the paper. By considering examples from the literature, we also show the difficulty—even for experts—of discerning the assumptions that underpin real-world proofs, and of ensuring that they hold in reality.

Another view on the value of a proof is that it lies not in trying to assure any guarantees but instead in forcing a very careful exploration of the software. This point of view is exemplified by various commentators online. Under this view, even partial (incomplete) proofs might have value.

We also briefly consider the commercial value of proofs, which includes marketing and product-differentiation.

Along we way we highlight the vast disconnect between what formal methods practitioners (i.e. the people who do these kinds of proofs) believe and what outsiders believe are the value of security proofs. One only has to consider the press coverage that surrounds formal verification results to see this: experts tend to be circumspect when talking about what their proofs deliver, while outsiders are prone to making overly strong claims about “invulnerable” and “hack-proof” software. This disconnect should be taken into account when experts communicate about formal proofs, especially to the public.

We argue that this disconnect suggests that one should choose their language carefully when talking about formal methods. To quote John Rushby, a pioneer of formal methods for security,

“Engineers in established fields use applied mathematics to predict the behavior and properties of their designs with great accuracy. … [T]he applied mathematics of software is formal logic, and calculating the behavior of software is an exercise in theorem proving.”

Under this analogy, I might equate proving the security of a piece of software with using applied mathematics to reason about the robustness of a bridge design. But, we argue, when was the last time you heard a civil engineer say that they proved or formally verified a bridge? It is, perhaps, not surprising that the public gets the wrong impression about the value of formal methods when we as researchers continue to use language like “proof” and “verification”—language that tends to imply 100% certainty—that is at odds with other engineering disciplines.

Side Effects

The paper also introduces, for the first time, the idea of proof side effects. When carrying out a proof, we might modify the software that we are proving secure. Sometimes this is to fix security vulnerabilities uncovered by the proof. However, in other cases, software is often modified during the proof because it makes carrying out the proof easier. For example, by simplifying the code it might become easier to reason about. The story from the beginning of this wonderful blog post, from the seL4 group on the interaction between their kernel developers and proof engineers, is a perfect example.

Of course, no formal definition of security is perfect. When I prove a piece of software secure, I am really proving that it meets some formal definition of security. That definition might imply that certain kinds of attack cannot succeed (for instance, buffer overflows) but it might not rule out other kinds of attacks, especially if those other kinds of attacks are below the level of abstraction of the formal model of the program’s behaviours that the proof reasons about. A good example is timing side-channel attacks, which are very difficult to reason about with proof because most formal models of program behaviours do not talk accurately about time.

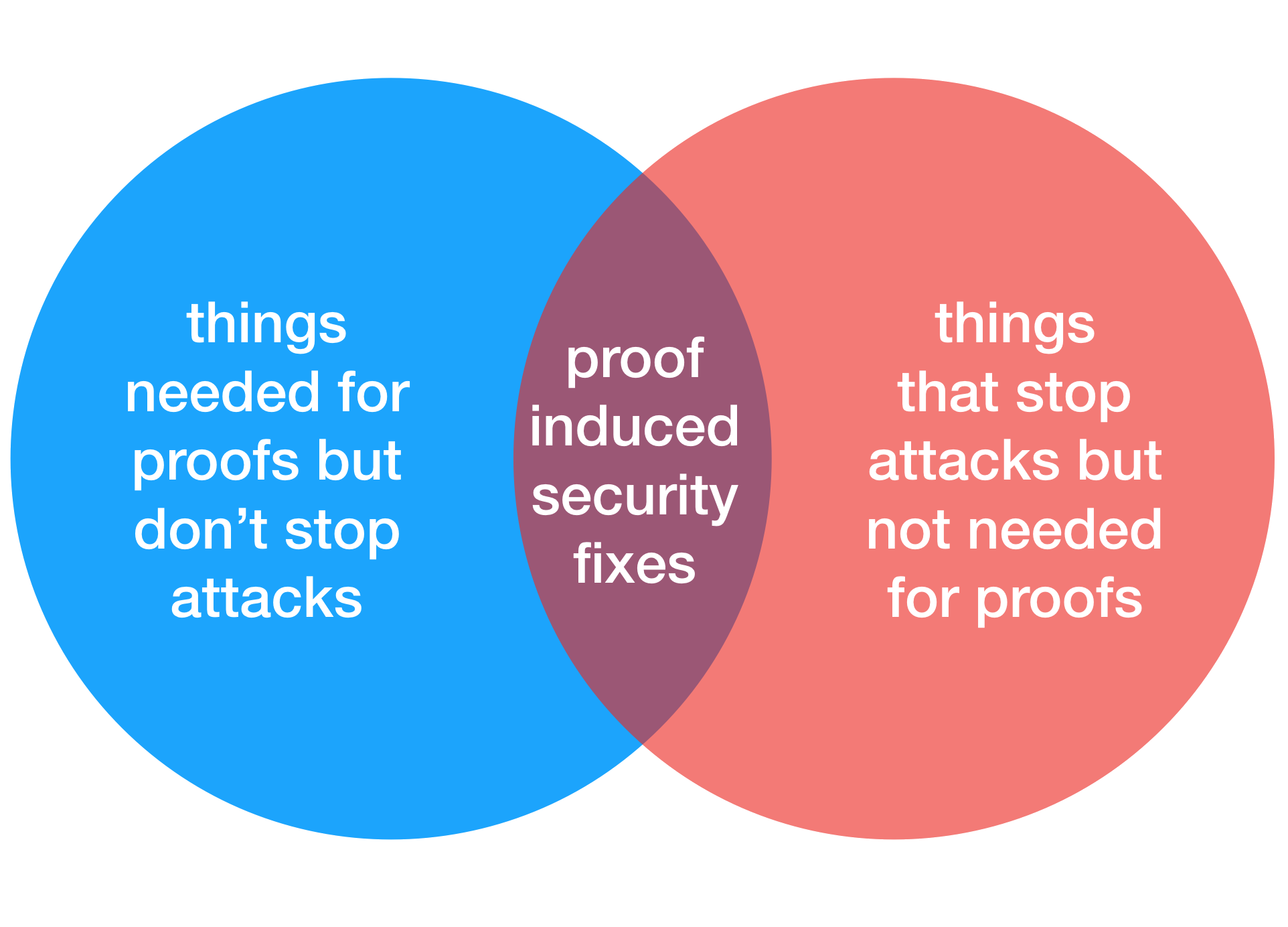

That means that there can always be other changes I wish I had made to my software, but that my proof did not mandate. This realisation allows us to consider the kinds of changes that I might make to my software, either because they help the security proof or because they actually improve security, or both. We can draw this distinction graphically as a Venn diagram.

Here, the blue circle contains all changes I might make to the software the are needed to help the proof succeed. The red circle contains all changes that will improve the software’s security (i.e. stop an attack). The purple overlap contains all the security fixes that I implement while carrying out the proof. Everything in the blue circle outside the purple one is a change I have to make to my software that doesn’t actually improve its security. We refer to these changes as side effects of the proof.

We should note that the figure above is almost certainly wrong, in that we have no idea what is the relative size of each of the circles, nor of their overlap. But this is the critical point: no one else has any idea either, and moreover, no one seems to have given it much thought (as far as we can tell). We believe that understanding the relationship between proof side effects and changes that stop attacks is an important open question.

In the worst case these side effect changes might even weaken some aspect of my software’s security. As an example, if my proof cannot reason about timing channels, I might simplify my software’s code (e.g. transforming constant-time cryptographic code into more readable code that, foolishly, branches on secret data) and unwittingly introduce a timing channel that nonetheless enables me to finish my security proof. That means that the blue circle outside of the purple overlap can actually contain vulnerability-inducing changes. This is something of a scary prospect for us proponents of formal security proofs.

Avoiding this kind of scenario of course requires careful attention to detail, making sure you get your formal security definition correct and that you employ other complementary assurance mechanisms to help rule out classes of attack that your proof doesn’t talk about.

Deployment Restrictions

One important class of proof side effect is when you are forced to disable useful functionality in your product in order to ensure that your proof’s assumptions hold in practice. We refer to these kinds of assumptions as deployment restrictions.

A good example is the assumption of the seL4 information flow security proof that all non-timer device interrupts are permanently disabled, which forces all device drivers to poll for interrupts. This assumption, and the associated deployment restriction, is needed and entirely reasonable because mainline seL4 does not isolate device interrupts between separate security partitions.

However, suppose I don’t care about information leakage due to interrupt-preemption. Would seL4 provide that weaker form of isolation if I kept device interrupts enabled? As somebody who has studied seL4’s information flow properties deeply, I’m fairly sure that the answer is “yes”. However, seL4’s information flow security theorem doesn’t provide a lot of help in deciding, because (at least in its current form) it applies only to the stronger form of isolation that requires that interrupts are disabled.

In the paper we also consider the related issue that arises when one creates a formal model of the software being verified that intentionally differs from the software in reality, and what guarantees the proof provides in that case.

Open Questions

All of this discussion leads to a number of open questions, which we believe are worthy of further study to better understand the value and costs of formal proofs as a security assurance mechanism.

We already mentioned a couple, such as how we might weigh the value of a proof if one of its assumptions does not hold in reality, and how we might determine the proper relationships between the various sets in the Venn diagram above. Others include how we should better communicate information about the assumptions that underpin proofs, and how we might better track and validate them.

I’m sure there are many more. Ultimately, by raising these questions our hope is to improve the understanding of proofs as a unique means of security assurance. By doing so, the power of this technology can be better harnessed for building secure system. This paper is yet another small step on that journey.